During this week’s lesson, I recalled that when I was a kid, my dad told me how the first computers took up an entire room just to perform what we would now consider very simple tasks. This always amazed me because, growing up with cellphones around, I couldn’t imagine why a computer would need to be so large. In our most recent lesson, we learned how machine learning works, and given the complexity behind it, I now can’t imagine how computers have become so much smaller yet so much more powerful.

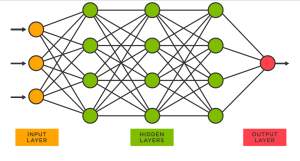

We looked at an example in class to explain how machine learning uses artificial neural networks (ANNs) to perform tasks for us. In this example, we wanted an ANN to identify whether a 20-pixel image represented an even or an odd one-digit number. To do this, the ANN requires 20 input nodes, one for each pixel. Each input node tells the ANN whether its assigned pixel is shaded in or not, in the form of a binary value (1 or 0). Next, the signal from each node is multiplied by a weight, which ideally reflects how strongly that pixel points toward an even or an odd number. At first these weights are assigned randomly, but with backpropagation, the ANN can work backward and adjust the weights to change the outcome, based on us telling the ANN whether its output was correct. This can happen countless times, and it gets more complex as more input nodes and more hidden layers are added; the hidden layers are where the weighted signals from the input nodes are combined. Below is a diagram from a LinkedIn article that shows a simple ANN with two hidden layers between the input nodes and the output nodes, which is where we get a response back from the machine.
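To make the even/odd example more concrete, here is a minimal Python sketch of the idea. It is not the network from class. The names `TinyANN`, `forward`, and `train_step` are made up for illustration, and so are the 4x5 pixel grid, the single hidden layer of 4 nodes (the diagram uses two hidden layers), the learning rate, and the choice that an output near 1 means "odd". It only shows the three pieces described above: binary pixel inputs, random starting weights, and a backpropagation step that adjusts the weights after we tell the network the right answer.

```python
import math
import random


def sigmoid(z):
    """Squash any number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


class TinyANN:
    """20 input nodes -> one small hidden layer -> 1 output node."""

    def __init__(self, n_inputs=20, n_hidden=4, seed=0):
        rng = random.Random(seed)
        # Weights start out random, as described above.
        self.w_hidden = [[rng.uniform(-0.5, 0.5) for _ in range(n_inputs)]
                         for _ in range(n_hidden)]
        self.b_hidden = [0.0] * n_hidden
        self.w_out = [rng.uniform(-0.5, 0.5) for _ in range(n_hidden)]
        self.b_out = 0.0

    def forward(self, pixels):
        """Return (hidden activations, output); output near 1.0 means 'odd'."""
        hidden = [sigmoid(sum(w * p for w, p in zip(row, pixels)) + b)
                  for row, b in zip(self.w_hidden, self.b_hidden)]
        output = sigmoid(sum(w * h for w, h in zip(self.w_out, hidden)) + self.b_out)
        return hidden, output

    def train_step(self, pixels, target, lr=0.1):
        """One backpropagation update for a single labeled image."""
        hidden, output = self.forward(pixels)
        # Error signal at the output node (sigmoid output, cross-entropy loss).
        delta_out = output - target
        # Pass the error backward to each hidden node before changing any weights.
        delta_hidden = [delta_out * w * h * (1.0 - h)
                        for w, h in zip(self.w_out, hidden)]
        # Nudge every weight and bias against its share of the error.
        for j, h in enumerate(hidden):
            self.w_out[j] -= lr * delta_out * h
        self.b_out -= lr * delta_out
        for j, row in enumerate(self.w_hidden):
            for i, p in enumerate(pixels):
                row[i] -= lr * delta_hidden[j] * p
            self.b_hidden[j] -= lr * delta_hidden[j]
        return output


# Made-up 4x5 bitmap of the digit 1 (1 = shaded pixel, 0 = blank pixel).
ONE = [0, 0, 1, 0,
       0, 1, 1, 0,
       0, 0, 1, 0,
       0, 0, 1, 0,
       0, 1, 1, 1]

net = TinyANN()
_, before = net.forward(ONE)
for _ in range(100):
    net.train_step(ONE, target=1.0)  # tell the network the right answer: odd
_, after = net.forward(ONE)
print(f"odd score before training: {before:.3f}, after: {after:.3f}")
```

Training on a single image like this only shows the weights moving in the right direction. A real run would loop over many labeled images of both even and odd digits.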